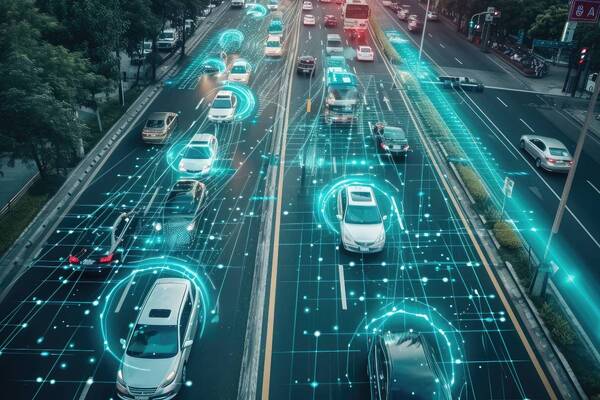

Connected car tech helps drivers see the invisible

Nissan merges real and virtual worlds. Digital character copyright Unity Technologies Japan/UCL

