Bridging the data gap in urban climate design

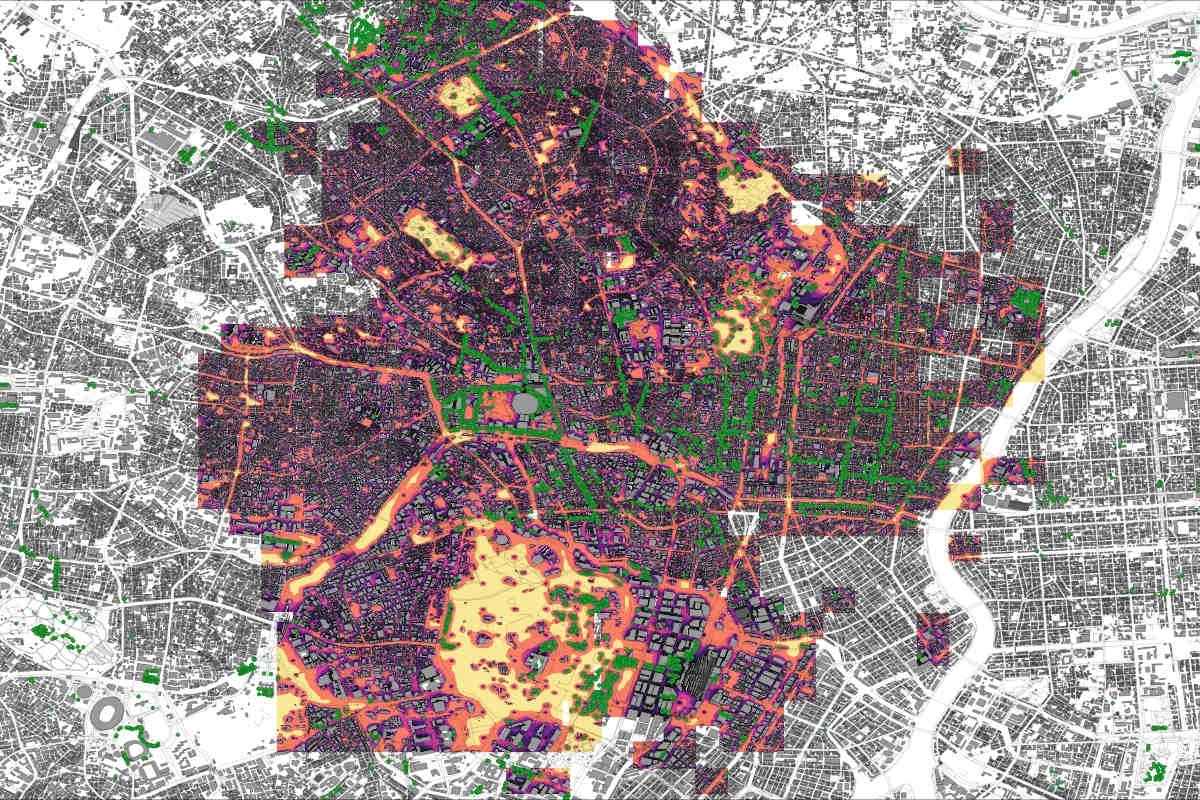

Oana Taut, co-founder and CPO of infrared.city, explains how AI, data and simulation are helping cities move beyond long-term climate ambitions towards immediate, measurable action.

Cities don’t lack climate ambition – they lack the tools to act on it. Working over several years with multiple stakeholders, Oana Taut, co-founder and chief product officer (CPO) of infrared.city, encountered a recurring challenge – the inability to objectively compare solutions or clearly communicate why one approach was better than another. This frustration, compounded by a reliance on intuition and aesthetics in the absence of data, ultimately pushed her to rethink her role as a designer.

Shifting away from practice, Taut began exploring artificial intelligence (AI) as a way to navigate the complexity of the built environment and support more informed decision-making. What started as a search for better tools evolved into a mission to build them – leading her to co-found infrared.city, where she now focuses on developing a platform that enables cities and planners to test, understand and act on climate challenges with far greater clarity.

SmartCitiesWorld (SCW): Which areas of climate change are cities prioritising today – and where are the biggest gaps?

Oana Taut (OT): From what we see, cities are prioritising the right impacts. They are concerned about heat mitigation, climate change drivers, circularity and waste. But there is a lack of data-informed measures that can be applied immediately. So, cities tend to focus on what they can already measure. For example, vehicle electrification is easier to track – you can quantify how much of the fleet has been electrified. Energy transition and renewables are also more straightforward to measure across building stock.

But when it comes to urban heat island mitigation or identifying climate shelters, there is strong interest but not enough data to support decisions or justify investment. Cities know they have a problem, but don’t know how a specific intervention will impact it.

This is the gap we are trying to close – not just by providing data but enabling decision-making tools that show how a specific action will affect outcomes.

SCW: What role do AI and simulation play in transforming urban planning?

OT: It’s a bit of a chicken-and-egg problem. The increased availability of data has enabled the development of AI, and now AI is required to make sense of all the available data.

Data itself is no longer the issue – even small municipalities typically have data. The real challenge is interpreting and using it actively for decision-making.

There’s also an important distinction between data and simulation. Data helps us understand what has happened. With AI, you can find correlations and patterns in historical data. But data alone doesn’t answer “what will happen if…?”

That’s where simulation becomes crucial. Cities sometimes say they don’t need simulation because they already have heat maps or satellite readings, but it’s not either/or – it’s both. Data informs simulation, and simulation allows you to test scenarios: What happens if we plant trees here? What happens if we introduce shading in this area?

Another key role of AI is turning multiple layers of data into combined actionable insights. For example, if you overlay heat data with demographic and density data, you can identify not only where interventions will reduce temperature, but where they will benefit the most vulnerable populations.

AI can analyse these complex relationships with a speed and reliability far beyond what a human can process, identifying patterns across multiple datasets.
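The overlay of heat, density and demographic layers described in this answer can be sketched in a few lines. This is a minimal illustration, not infrared.city's method: the district names, the values, the weights and the `priority_scores` helper are all hypothetical, and a simple weighted sum stands in for whatever analysis a real platform would run.

```python
# Hypothetical per-district layers; every value here is invented for illustration.
districts = {
    "A": {"heat_c": 38.0, "density": 12000, "vulnerable_share": 0.30},
    "B": {"heat_c": 35.0, "density": 4000, "vulnerable_share": 0.10},
    "C": {"heat_c": 37.0, "density": 9000, "vulnerable_share": 0.45},
}


def normalise(values):
    """Min-max scale a layer to the 0..1 range; a constant layer maps to 0."""
    lo, hi = min(values.values()), max(values.values())
    span = hi - lo
    return {k: (v - lo) / span if span else 0.0 for k, v in values.items()}


def priority_scores(districts, weights):
    """Combine the normalised layers into one weighted score per district."""
    layers = {
        name: normalise({d: vals[name] for d, vals in districts.items()})
        for name in weights
    }
    return {
        d: sum(weights[name] * layers[name][d] for name in weights)
        for d in districts
    }


# Assumed weights: heat and vulnerability count equally, density less so.
weights = {"heat_c": 0.4, "density": 0.2, "vulnerable_share": 0.4}
scores = priority_scores(districts, weights)
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking)
```

The weights are a policy choice rather than a technical one: changing them changes which districts are ranked first, which is why they are kept explicit here.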

SCW: What strategies should cities adopt to create more sustainable and resilient environments?

OT: I would start with the role of AI in reducing technical barriers. As AI capabilities improve, technical skill becomes less of a limiting factor and human experience becomes more important. Our role shifts towards asking the right questions and guiding AI tools in a way that aligns with human needs and experience-driven intent.

This means smaller municipalities, even with limited budgets, can operate at a similar level to better-funded cities if they have access to the right tools.

But in terms of strategy, I have an ongoing frustration with long-term plans. We’ve had strategies for 2010, 2020, 2025, 2030 – all with ambitious targets. But we often lack clarity on what is happening right now. We know the targets, but we don’t have the tactics.

I would rather focus on immediate actions – what can be done today with existing budgets. These tactics are what allow us to improve year on year and they are also what enable long-term success. For example, if we want to reduce urban heat by 2050, we need to plant trees today. If we delay action, we won’t meet those targets.

The overall strategies have their place, and are all ambitious – the issue is implementation. We need to focus on paving the way towards those goals through concrete actions from year one.

SCW: How should architects and planners approach climate resilience differently today compared to 10-15 years ago?

OT: The biggest shift is recognising that the greatest opportunity to improve sustainability is at the very beginning of the design process – before there is even a defined massing.

Traditionally, sustainability consultants are brought in after the design is set. At that point, they can only apply solutions on top of an already suboptimal design. These solutions cost money and have limited impact.

Instead, we need to integrate simulation into the early design stages. This allows designers to shape buildings for optimal performance from the outset. There is no sustainability layer that can fix a fundamentally flawed design.

The good news is that there is strong motivation among designers to create sustainable buildings. What’s missing are the tools and processes to support that.

Another challenge is over-reliance on intuition. As architects gain experience, intuition can become a false sense of certainty. This is especially problematic when working in unfamiliar contexts – for example, designing in a completely different climate.

We need to start from data. That includes understanding that traditional meteorological data is based on historical conditions, while today we also need to consider forecasting data. With the right tools, we can simulate future climate scenarios and design accordingly.

The key is to evaluate early, use data from the start, and optimise building form and function before considering additional systems.

SCW: How is collaboration evolving between cities, planners and other stakeholders?

OT: Collaboration is a huge opportunity. Historically, data has been fragmented across stakeholders, and important information has often been inaccessible.

What we need is a single source of truth – a shared dataset that can be accessed and interrogated by all stakeholders, from policymakers and developers to researchers. This also ties into reducing the skill barrier. If data can be accessed and interpreted through intuitive tools, more people can participate effectively in decision-making.

Another critical aspect is transparency towards citizens. Traditionally, information has been curated and presented in ways that are not always accessible or relevant to the public.

With data-driven decision-making, there is an opportunity to be more transparent – to explain not just what decisions are made, but why.

We can even imagine platforms with multiple interfaces – one for decision-makers and another for citizens – where both can access relevant data in ways tailored to their needs. This kind of alignment can significantly accelerate decision-making.

The spatial component is also crucial. When data is presented geographically, it becomes much easier for everyone to understand the problem from the same perspective.

SCW: What is the role of historical data in a world of increasing climate uncertainty?

OT: Historical data remains essential. It is the foundation for generating future scenarios and forecasting models.

However, today’s challenge is that we rely on data we can validate and trust, and there is still no full consensus on the best methods for long-term climate forecasting. That’s why we often use multiple scenarios – for 2030, 2050 and beyond.

In practice, it’s important to use historical data as a baseline and then apply assumptions about future change. Personally, I prefer to look at simulation results in terms of patterns and gradients rather than absolute values.

For example, in urban heat simulations, the exact temperature value may change depending on the scenario, but the spatial patterns – where the hottest areas are – remain highly relevant.

With the right tools, you can evaluate the same design across both historical and multiple forecasted scenarios. This gives a more robust understanding of how interventions will perform over time.
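The point about patterns versus absolute values can be illustrated with a small sketch. The zone names and temperatures below are invented, and the helper functions are hypothetical. The sketch only shows that two scenarios can disagree on every temperature value while agreeing on where the hotspots are.

```python
# Hypothetical simulated surface temperatures (deg C) for the same four zones.
historical = {"plaza": 34.0, "park": 29.5, "canal": 30.5, "car_park": 36.0}
scenario_2050 = {"plaza": 36.8, "park": 31.2, "canal": 32.9, "car_park": 39.1}


def hottest_first(temps):
    """Return zone names ordered from hottest to coolest."""
    return sorted(temps, key=temps.get, reverse=True)


def shared_hotspots(a, b, top_n):
    """Zones that appear among the top_n hottest in both scenarios."""
    return set(hottest_first(a)[:top_n]) & set(hottest_first(b)[:top_n])


# Every absolute value differs between scenarios, yet the ordering is identical.
same_order = hottest_first(historical) == hottest_first(scenario_2050)
hotspots = shared_hotspots(historical, scenario_2050, top_n=2)
print(same_order, sorted(hotspots))
```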

The overall strategies are already there – we don’t need to rethink the targets. What we need is action. We need to move beyond high-level ambition alone and also focus on immediate, data-informed action. With the tools now available – especially AI-enhanced simulation – we can make better decisions, faster, and with greater transparency.

The opportunity is not just to design better cities, but to create a shared understanding between stakeholders and citizens, and to align everyone around decisions that are grounded in data.